
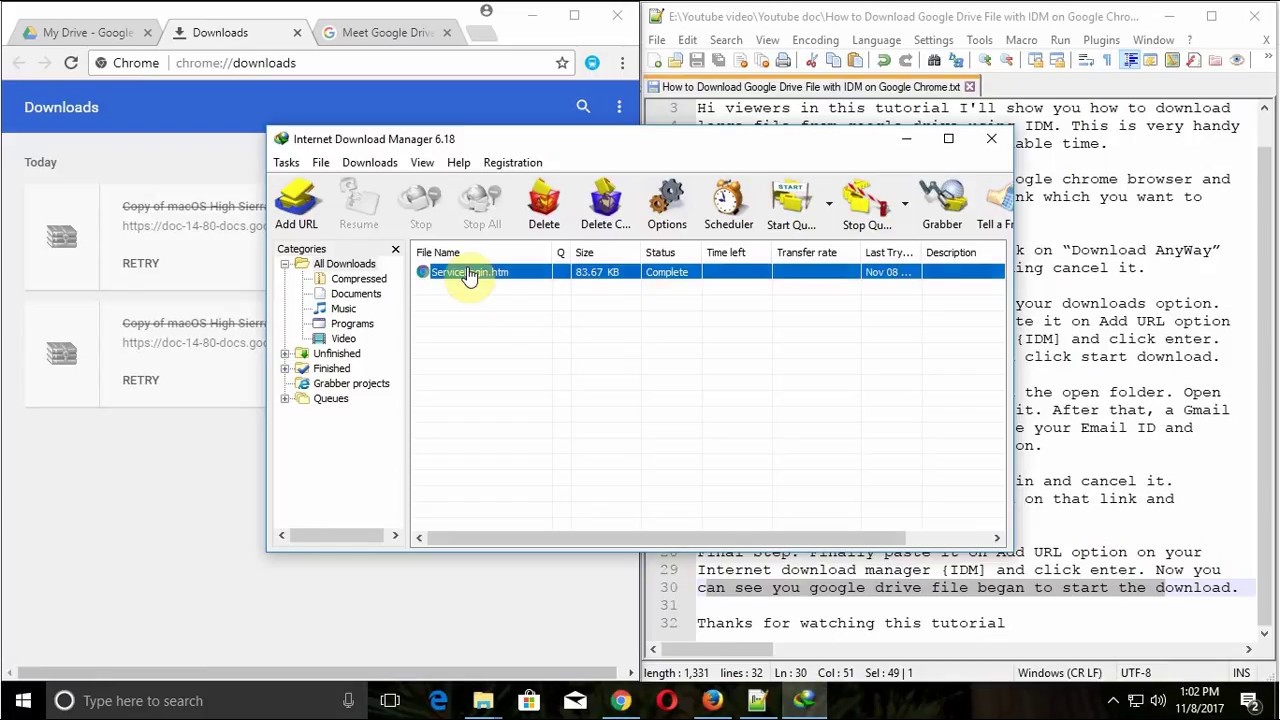

When I download a Google Drive folder shared with me, Chromium hands it to its default download manager. The problem is that the folder is very large, so I need resume support.
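Resume support comes down to asking the server only for the bytes that are still missing. Below is a minimal sketch using Python's standard library. It assumes the server honours HTTP `Range` requests and that `url` is a direct file link. It does not handle Google's sign-in. The helper names `resume_offset`, `range_header`, and `resume_download`, and the `extra_headers` hook (for example, for an OAuth `Authorization` header), are hypothetical:

```python
import os
import urllib.request


def resume_offset(path):
    """Return how many bytes of `path` are already on disk (0 if absent)."""
    return os.path.getsize(path) if os.path.exists(path) else 0


def range_header(offset):
    """Open-ended HTTP Range header requesting everything from `offset` on."""
    return {'Range': 'bytes=%d-' % offset}


def resume_download(url, path, chunk_size=1 << 20, extra_headers=None):
    """Append the missing tail of `url` to `path`; return the final file size."""
    offset = resume_offset(path)
    # extra_headers is a hypothetical hook, e.g. for an auth header
    headers = dict(extra_headers or {})
    if offset:
        headers.update(range_header(offset))
    req = urllib.request.Request(url, headers=headers)
    with urllib.request.urlopen(req) as resp:
        if offset and resp.status != 206:
            # Server ignored Range and sent the whole file; appending would corrupt it
            raise RuntimeError('server did not honour Range (status %d)' % resp.status)
        with open(path, 'ab') as out:
            # Stream in chunks so a huge file never has to fit in RAM
            while True:
                chunk = resp.read(chunk_size)
                if not chunk:
                    break
                out.write(chunk)
    return os.path.getsize(path)
```

After an interruption, calling `resume_download(url, 'big.zip')` again appends only the missing tail. The status-206 check is important. A server that ignores `Range` replies with 200 and the full file, and appending that to the partial file would corrupt it.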


How To Download Large Files From Google Drive

gdrive_dl.py

- How to Download Files and Webpages Directly to Google Drive in Chrome (Lori Kaufman, @howtogeek, December 22, 2016, 10:24am EDT): We've all downloaded files from the web to our computer.

- Jan 16, 2018: Currently, Box has a maximum file download size of 15 GB. Available Box space (as Google Drive does for shared files) so as to use Box Drive (as …

- How to Send Large Files in Gmail via Google Drive: With seamless integration, sending big files through email in Gmail is almost as simple as attaching them. Users can set permissions for all files uploaded and sent via Google Drive so that only intended recipients can access and download the shared files.

- I uploaded a 1.3 GB file to Google Drive. When I try to download it through the browser (Firefox), only 1 GB arrives before the download fails. I tried copying the download link into a download manager, but Google redirects to an HTML 'ServiceLogin' page, and the manager downloads that page instead.


```python
"""API calls to download a very large Google Drive file.

The Drive API only allows downloading to RAM (unlike, say, the Requests
library's streaming option), so the file has to be downloaded in parts and
written chunk by chunk. Authentication requires Google API credentials and a
local copy of client_secrets.json.

Thanks to Radek for the key functions:
http://stackoverflow.com/questions/27617258/memoryerror-how-to-download-large-file-via-google-drive-sdk-using-python
"""
from pydrive.auth import GoogleAuth
from pydrive.drive import GoogleDrive


def partial(total_byte_len, part_size_limit):
    """Split total_byte_len bytes into inclusive [first, last] byte ranges."""
    s = []
    for p in range(0, total_byte_len, part_size_limit):
        last = min(total_byte_len - 1, p + part_size_limit - 1)
        s.append([p, last])
    return s


def GD_download_file(service, file_id):
    """Download file_id in 100 MB ranges; return (title, filename) or None."""
    # Drive API v2 metadata (PyDrive uses v2): downloadUrl, fileSize, title, ...
    drive_file = service.files().get(fileId=file_id).execute()
    download_url = drive_file.get('downloadUrl')
    if not download_url:
        return None  # no direct download link (e.g. a native Google Doc)
    total_size = int(drive_file.get('fileSize'))
    s = partial(total_size, 100000000)  # I'm downloading BIG files, so a 100 MB chunk size is fine for me
    title = drive_file.get('title')
    originalFilename = drive_file.get('originalFilename') or title
    filename = './' + originalFilename
    with open(filename, 'wb') as f:
        print('Bytes downloaded: ')
        for first, last in s:
            # HTTP Range bounds are inclusive, matching partial()
            headers = {'Range': 'bytes=%s-%s' % (first, last)}
            resp, content = service._http.request(download_url, headers=headers)
            if resp.status == 206:  # 206 Partial Content: this range was served
                f.write(content)
                f.flush()
            else:
                print('An error occurred: %s' % resp)
                return None
            print(str(last) + '...')
    return title, filename


if __name__ == '__main__':
    gauth = GoogleAuth()
    gauth.CommandLineAuth()  # requires cut and paste from a browser
    FILE_ID = 'SOMEID'  # the file's Drive ID, e.g. 0B1NzlxZ5RpdKS0NOS0x0Ym9kR0U
    drive = GoogleDrive(gauth)
    service = gauth.service
    # file = drive.CreateFile({'id': FILE_ID})  # Use this to get file metadata
    GD_download_file(service, FILE_ID)
```
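You can check the byte-range arithmetic in `partial` on its own, without credentials. The sketch below repeats `partial` unchanged and adds `remaining_ranges`, a hypothetical helper that is not part of the original script. It shows how the same ranges could support resuming: skip the bytes already on disk and request only the rest.

```python
def partial(total_byte_len, part_size_limit):
    """Split total_byte_len bytes into inclusive [first, last] byte ranges."""
    s = []
    for p in range(0, total_byte_len, part_size_limit):
        last = min(total_byte_len - 1, p + part_size_limit - 1)
        s.append([p, last])
    return s


def remaining_ranges(total_byte_len, part_size_limit, bytes_on_disk):
    """Hypothetical resume helper: chunk only the bytes not yet on disk."""
    # Chunk the missing tail, then shift every range past the existing bytes
    return [[first + bytes_on_disk, last + bytes_on_disk]
            for first, last in partial(total_byte_len - bytes_on_disk, part_size_limit)]


print(partial(250, 100))                # [[0, 99], [100, 199], [200, 249]]
print(remaining_ranges(250, 100, 120))  # [[120, 219], [220, 249]]
```

One way to make `GD_download_file` resumable would be to pass `os.path.getsize(filename)` as `bytes_on_disk` and open the file in `'ab'` mode instead of `'wb'`. This assumes the partial file already on disk is intact.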

